When signals lose their cost, trust loses its meaning.

Civilization has never trusted people directly.

This is not cynicism. It is a structural observation about how trust actually operates at scale — and why it has worked for as long as it has.

Direct trust — the kind built through sustained personal encounter, through observing someone navigate genuine difficulty over time, through witnessing the specific ways a person’s judgment holds and fails — is extraordinarily powerful and extraordinarily limited. It scales only to the size of the relationships through which it was built. A physician’s patients may develop direct trust in their physician through years of personal medical care. A lawyer’s clients may develop direct trust through a sustained professional relationship. A commander’s soldiers may develop direct trust through shared difficulty and observed judgment.

But civilization requires trust at scales that direct trust cannot reach. Thousands of physicians treating millions of patients. Thousands of lawyers advising millions of clients. Thousands of engineers designing infrastructure used by hundreds of millions. Thousands of policymakers making decisions that affect billions. At these scales, direct trust is impossible. Something else is required.

What civilization developed — across millennia, through trial and error, through the specific experience of what happens when trust is misplaced at scale — was a system of proxies. Signals that indicated trustworthiness without requiring direct encounter with the person being trusted. Signals that were reliable because they were expensive — because the cognitive work, the genuine encounter with difficulty, the sustained effort required to produce them meant that the signals could not be easily faked.

The medical degree indicated genuine clinical competence because obtaining it required genuine clinical formation. The legal credential indicated genuine legal understanding because acquiring it required genuine encounter with legal complexity. The engineering certification indicated genuine structural knowledge because earning it required sustained encounter with physical systems and their failure modes. The published research indicated genuine scientific understanding because producing it required genuine engagement with the domain’s difficulty.

These proxies were not perfect. They were never perfect. But they were reliable enough — reliable in the specific sense that the cost of producing them was high enough that faking them was structurally difficult, and the correlation between the signal and what it was supposed to indicate was real enough that trusting the signal was rational.

That reliability has ended.

Nothing replaced it.

The Cost Structure That Made Trust Work

To understand why the end of trust-by-proxy is structurally inevitable given the current trajectory of AI deployment, it is necessary to understand precisely what made the proxy system work — because the failure is not in the proxies themselves but in the cost structure that made them reliable.

Every proxy that civilization developed to indicate trustworthiness worked through the same mechanism: it required the person producing it to do something that was genuinely difficult to do without possessing the underlying capability it was supposed to indicate. The difficulty was the mechanism. The cost was the correlation.

You could not, in practice, produce a convincing clinical assessment without genuine clinical understanding. This was not because clinical assessment is impossible to fake in principle. It was because producing it at the level required for professional credentialing was expensive enough that faking it systematically was prohibitive. The cost of faking filtered out most fakers. The correlation between the signal and the underlying capability was not perfect, but it was good enough to sustain a trust system built on it.

AI does not break trust. It breaks the cost structure that made trust meaningful.

This is the specific property of AI assistance that makes the proxy collapse so complete and so invisible. AI does not destroy the signals civilization uses to verify trustworthiness. It makes those signals cheap. And when signals become cheap — when they can be produced without the underlying capability they were supposed to indicate — they cease to function as reliable indicators of that capability while continuing to appear as though they do.

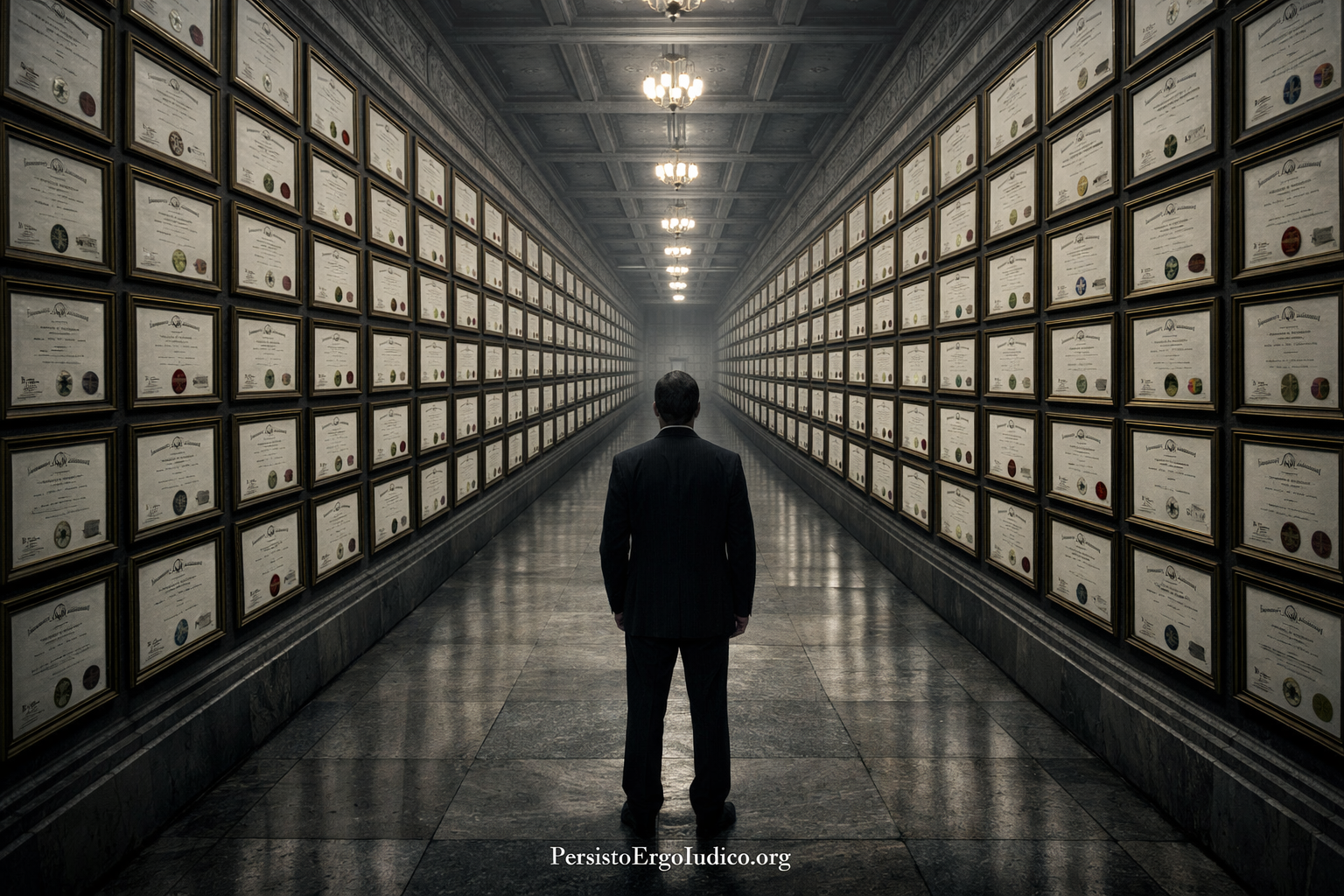

The credential still exists. The examination was still passed. The certification was still awarded. The published analysis still appears. The professional performance is still correct. Every signal that civilization built its trust system on is still present, still being produced, still carrying the institutional authority it accumulated when it was expensive to produce.

The underlying capability may not be present. And nothing in the proxy system can detect this.

A proxy that no longer correlates with reality does not become weak. It becomes deceptive.

The Paradox of Increasing Trust

Here is the specific paradox that the end of trust-by-proxy creates — and that makes it so structurally dangerous:

Trust is not decreasing. It is increasing.

The danger is not that trust collapses. The danger is that it continues unchanged.

This sounds wrong. The common narrative about the AI era is that trust is collapsing — that deepfakes, synthetic media, and AI-generated content are eroding the foundations of social trust. This narrative captures something real. But it misses the more consequential phenomenon occurring at the institutional and professional level.

At the level of institutions, credentials, and professional signals, trust is not collapsing. It is functioning normally — or appearing to function normally, which for the purpose of trust is the same thing. Credentials are being awarded. Institutions are certifying competence. Professional signals are being produced. The trust infrastructure is operating.

For the first time in history, trust is increasing while trustworthiness is decreasing.

We are entering an era where trust is abundant and reliability is scarce. The system is becoming more confident about things it understands less.

This paradox is not merely ironic. It is structurally catastrophic. When trust collapses visibly — when people can see that signals are unreliable, that credentials are meaningless, that professional performance does not indicate genuine competence — the system corrects. People stop trusting the signals. Institutions develop new verification mechanisms. The failure of the old trust system creates the pressure to build a better one.

When trust continues while trustworthiness declines invisibly — when the signals remain convincing while the correlation between signal and capability disappears — the system does not correct. There is no visible failure to respond to. There is no moment at which the unreliability of the signals becomes apparent enough to generate institutional response. The trust continues. The credentials accumulate. The professional records build. The institutions certify. And the gap between what is trusted and what is trustworthy widens silently, without signal, until the novel situations arrive and the gap becomes catastrophically, irreversibly consequential.

How Proxy Collapse Works

Proxy Collapse — the condition in which the signals that once indicated genuine competence become cheaper to produce than the competence itself — does not occur suddenly. It occurs through a specific sequence that makes it structurally invisible at every stage.

In the first stage, AI assistance makes professional performance more efficient for practitioners who possess genuine structural competence. The genuine expert uses AI to accelerate familiar tasks, to access relevant information more quickly, to produce correct outputs in less time. The performance quality improves. The efficiency improves. The signals of professional competence become more abundant and more polished. Nothing in this stage indicates that anything problematic is occurring.

In the second stage, AI assistance begins to enable practitioners without genuine structural competence to produce the same performance signals as practitioners with genuine competence. This is the stage at which the cost structure breaks — where the expensive correlation between signal and capability begins to dissolve. But the dissolution is invisible because the signals are still correct. The performance is still satisfactory. The credentials are still legitimately earned by the institutional standards that govern them. The proxy system is producing the same outputs it always produced. No alarm sounds because no metric has declined.

In the third stage, the population of practitioners certified by the proxy system divides into two groups that are externally indistinguishable: those who possess genuine structural competence and those who possess the signals of genuine structural competence without the underlying capability. Both groups produce correct professional performance under normal conditions. Both groups hold identical credentials. Both groups occupy the same professional positions with the same institutional authority and the same social trust.

We trust credentials because we trust the world in which credentials used to mean something.

But that world has changed. And the credentials do not know it.

In the fourth stage, which has not yet arrived at full scale but follows predictably from the first three, the novel situations arrive. The conditions shift beyond what professional templates anticipated. The genuine experts recognize the shift and respond appropriately. The practitioners with proxy competence do not recognize it. The trust that was placed in them, based on signals that no longer correlate with the underlying capability they were supposed to indicate, produces consequences that the trust system has no mechanism to anticipate or prevent.

Proxy collapse is not the failure of trust. It is the failure of the world that made trust possible.

What Gets Trusted Now

The consequence of proxy collapse is not that trust disappears. It is that trust becomes structurally misallocated — distributed according to signals that no longer reliably indicate what they were designed to indicate, producing a trust landscape that is simultaneously confident and groundless.

Consider the specific domains where trust-by-proxy has historically been most consequential and most carefully constructed:

Medical trust. Patients trust physicians based on proxies — credentials, institutional affiliations, professional reputation — that were designed to indicate genuine clinical competence. When those proxies no longer correlate with genuine structural clinical judgment, the trust continues while the trustworthiness has silently shifted. The patient cannot detect this. The institution cannot detect this. The physician, in many cases, cannot detect this. The trust is real. Its foundation is not.

Legal trust. Clients trust lawyers based on proxies designed to indicate genuine legal understanding. Courts trust legal arguments based on the credentials of the lawyers presenting them. When those proxies can be produced without genuine structural legal comprehension, the trust continues while the underlying competence has silently diverged. The legal system continues to function — processing arguments, issuing decisions, allocating rights and responsibilities — on the basis of trust in signals that no longer reliably indicate the capability they were supposed to certify.

Institutional trust. Regulators trust regulated entities based on compliance documentation, audit results, and professional certifications — all proxies designed to indicate genuine organizational competence. When those proxies can be produced through AI-assisted performance without the genuine structural competence they were supposed to indicate, regulatory trust continues while regulatory protection silently erodes.

Scientific trust. The scientific community trusts research based on publication credentials, peer review, and methodological documentation — proxies designed to indicate genuine scientific understanding. When sophisticated scientific analysis can be produced without the structural comprehension required to identify its own limitations, the peer review system trusts the proxies while the underlying epistemic integrity has silently degraded.

In each case: the trust is functioning exactly as designed. The signals are present. The institutional verification processes are operating. Nothing in the contemporaneous trust infrastructure indicates that anything has changed.

Institutions do not verify competence. They verify the signals that used to indicate competence. When the signals lose their cost, institutions lose their sight.

Trust Entropy

There is a specific dynamic that accelerates proxy collapse beyond the initial structural break: a feedback mechanism that can make the gap between signal and capability widen faster than AI capabilities themselves expand.

When practitioners discover that professional signals can be produced without the underlying competence those signals were designed to indicate, the rational incentive structure shifts. The investment in genuine structural competence — which is expensive, time-consuming, and requires genuine encounter with difficulty — competes against the investment in signal production — which is increasingly cheap, fast, and AI-assisted. In an environment where both investments produce identical professional outcomes under normal conditions, the rational choice is to optimize for signal production.

This is not cynicism. It is an economic response to a changed incentive structure. The practitioners making this choice are not dishonest. They are responding rationally to a system that no longer rewards the expensive investment in genuine competence differently from the cheap investment in competence signals.

The result is Trust Entropy: the rate at which signals lose their cost while retaining their authority. As more practitioners optimize for signal production rather than genuine competence development, the average structural competence in the professional population declines while the average signal quality remains constant or improves. The signals become more polished as the capability they were designed to indicate becomes more scarce.

When trust entropy rises, institutions cannot detect their own decay.

What trust entropy accumulates over time has its own name: Authenticity Debt, the accumulated gap between trusted signals and the underlying competence required to justify them. Authenticity debt compounds silently, and it is paid all at once.

The specific danger of trust entropy is its acceleration dynamic. As the proportion of practitioners with genuine structural competence declines, the ability to detect the decline also declines — because detection requires genuine structural competence. The physicians who can recognize when clinical reasoning has been produced without genuine structural clinical judgment are the physicians with genuine structural clinical judgment. As that population shrinks, so does the capacity to detect its shrinking.

The system becomes progressively less able to see its own degradation precisely because the degradation is removing the capacity required to see it.

The Civilizational Consequence

Major civilizational collapses in the historical record have tended to involve, at some level, a failure of trust infrastructure: a breakdown in the signals that societies use to allocate authority, responsibility, and decision-making power to the people and institutions most capable of wielding them effectively.

These collapses have historically been visible. The signals failed in ways that were observable — through obvious incompetence, through visible corruption, through performance failures that were impossible to ignore. The failure of the trust infrastructure was detectable because the signals broke in ways that the civilization’s observational capacity could register.

The proxy collapse of the AI era is different. The signals do not break. They continue to be produced at high quality. The credentials continue to be issued. The professional performance continues to be correct. The institutions continue to certify. The trust infrastructure continues to function — accurately, rigorously, and in complete institutional good faith — while the underlying capability it was designed to verify silently diverges from the signals it continues to produce.

A society collapses not when people stop trusting — but when they trust the wrong things.

The civilizational consequence of proxy collapse is not a crisis of distrust. It is a crisis of misplaced trust — of confidence allocated according to signals that have lost their correlation with the underlying reality those signals were designed to indicate. This misallocation is invisible during normal conditions, when the capability gap between genuine structural competence and proxy competence does not affect observable outcomes. It becomes catastrophically visible when conditions change — when the novel situations arrive, when the familiar frameworks stop governing, when the trusted practitioners are called upon to exercise the genuine structural judgment that their signals indicated they possessed and that the proxy system certified they had developed.

At that moment, the full cost of proxy collapse arrives simultaneously, across every domain where trust-by-proxy has been the foundation of civilizational function — medicine, law, engineering, governance, science, finance — in the specific situations where genuine structural competence was most critical and most trusted.

And the trust infrastructure has no mechanism to detect that this moment is approaching. Because the signals are still correct. The credentials are still present. The performance is still satisfactory.

Until it is not.

What Trust Requires Now

The proxy system cannot be restored. The cost structure that made proxies reliable cannot be reinstated by institutional decree. The only path toward a functional trust infrastructure in the AI era is through a different kind of verification — one that tests not the signals of genuine competence but the persistence of the structural models that genuine competence leaves behind.

This is what the Persisto Ergo Iudico Protocol establishes. Not a new set of proxies. Not a different signal to replace the ones that have lost their cost. A direct test of whether genuine structural evaluative capacity exists — whether the reasoning behind professional conclusions can be reconstructed independently after temporal separation, whether the conditions under which those conclusions hold can be identified, whether the structural capacity transfers to genuinely novel contexts.

This test cannot be passed through signal production. It requires the structural model itself. And structural models, unlike signals, cannot be cheaply produced — they can only be built through the specific cognitive encounter with genuine difficulty that the proxy system was originally designed to certify.

We do not trust people directly. We trust the signals that used to cost them something.

The response to proxy collapse is not to stop trusting signals. It is to stop trusting signals that have lost their cost — and to replace them with verification mechanisms that test what the signals were designed to indicate rather than the signals themselves.

A civilization that cannot rebuild its trust infrastructure around the direct verification of genuine structural competence will continue to allocate trust based on proxy signals that stopped indicating trustworthiness at the moment they stopped being expensive to produce.

And the gap between trusted and trustworthy will continue to widen.

Silently. Correctly. Certified at every step.

When trust becomes free, it becomes blind. And blind trust is indistinguishable from collapse.

Persisto Ergo Iudico.

PersistoErgoIudico.org/protocol — The verification standard that tests capability rather than signals

PersistoErgoIntellexi.org — How the same proxy collapse operates in the verification of understanding

TempusProbatVeritatem.org — The foundational principle: time proves truth

All materials published under PersistoErgoIudico.org are released under Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA 4.0). No entity may claim proprietary ownership of temporal verification methodology for judgment.

2026-03-17